NEWS

Microsoft taught the neural network to animate faces by recording their speech

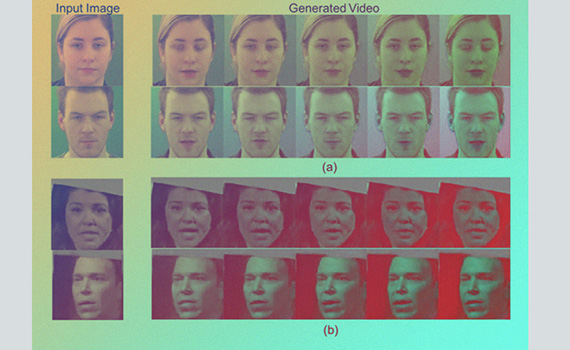

Engineers at Microsoft Research learned how to animate faces in still photos using raw speech recordings from these people. A description of the algorithm is published on arXiv.org.

n the traditional format for animating still images, information is transferred from the video to the desired frame. In this case, a video sequence is used to revive the picture, while often there is only an audio sequence, which must be used.

The algorithm created by Microsoft is context-sensitive. The model distinguishes from the audio clip not only human speech and its phonetic features, but also an emotional range and even extraneous noise. Due to this, various aspects of speech can be superimposed on the video sequence: screaming, indignation, disappointment or joy.

This approach will allow you to impose on a static picture not only direct and unemotional speech, but also live. Now the algorithm understands six basic emotions that it can animate.

To train the neural network, the authors used thousands of video recordings of 34 people speaking with a neutral expression, and 7.4 thousand with different emotional colors. In addition, the authors took 100 thousand excerpts of videos from TED for training.

_1750829846_987d3.jpeg)