DeepMind's artificial intelligence recreates a three-dimensional scene from a photo

The algorithm learned from many examples to work out where the light source is and how it affects the surrounding objects.

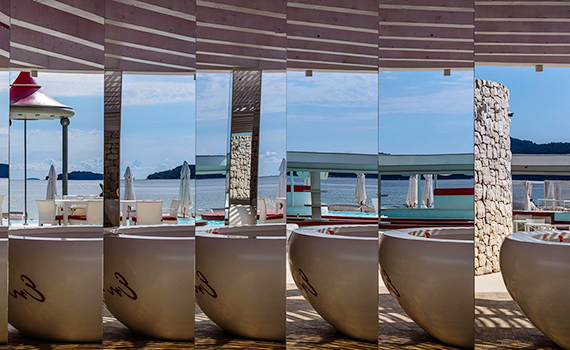

The British company DeepMind has developed a neural network that can reconstruct a three-dimensional environment from a single two-dimensional image.

The system is called the Generative Query Network (GQN). To train it, the team showed the network images of the same scene taken from different viewpoints. The network used these images to learn how objects change in appearance and to predict how they would look from other angles. The system also takes textures and lighting into account.
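The idea of combining several views into one scene description and then "asking" it about a new viewpoint can be sketched in a few lines. This is a minimal illustration under stated assumptions, not DeepMind's code: the real GQN uses trained deep networks, while here `represent` and `render` are hypothetical fixed functions, and the viewpoint encoding (position plus cosine and sine of the camera heading) is also an assumption. What the sketch does show is the aggregation step: the per-view representations are summed, so the scene description does not depend on the order of the photos and accepts any number of them.

```python
import math
from typing import List, Sequence, Tuple

Vector = List[float]


def viewpoint_code(x: float, y: float, yaw: float) -> Vector:
    # Hypothetical viewpoint encoding: camera position plus cos/sin of its heading.
    return [x, y, math.cos(yaw), math.sin(yaw)]


def represent(image: Sequence[float], view: Vector) -> Vector:
    # Hypothetical stand-in for a learned representation network:
    # a fixed nonlinear mix of image features and the viewpoint code.
    features = list(image) + view
    return [
        math.tanh(sum((i + k + 1) * 0.1 * f for i, f in enumerate(features)))
        for k in range(4)
    ]


def scene_representation(observations: Sequence[Tuple[Sequence[float], Vector]]) -> Vector:
    # Element-wise sum over all observations: order-invariant and
    # usable with any number of views of the same scene.
    total = [0.0] * 4
    for image, view in observations:
        total = [t + r for t, r in zip(total, represent(image, view))]
    return total


def render(scene: Vector, query_view: Vector) -> Vector:
    # Hypothetical stand-in for the generator: predicts image features
    # for a viewpoint that was never observed.
    return [math.tanh(s + v) for s, v in zip(scene, query_view)]


if __name__ == "__main__":
    # Two toy "photos" (2 features each) taken from different positions.
    obs = [
        ([0.2, 0.8], viewpoint_code(0.0, 0.0, 0.0)),
        ([0.5, 0.1], viewpoint_code(1.0, 0.0, math.pi / 2)),
    ]
    scene = scene_representation(obs)
    print(render(scene, viewpoint_code(0.5, 0.5, math.pi)))
```

Summation is one simple way to make the description independent of photo order; in a trained system the useful behaviour comes from learning `represent` and `render` so that predicted views match real ones.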

One of the researchers, Danilo Rezende, said that the algorithm learns in much the same way people do: having seen the same object many times, it analyzes its characteristics, remembers them and uses them the next time it encounters the object. According to him, the system can reproduce an entire maze after scanning several photographs taken inside it.